Resurrecting Memories: The Ethics of AI Afterlife Technology

A few months ago, my family experienced a painful loss, and during that time I started noticing a trend

on Instagram Reels where people were using AI to "bring back" their deceased relatives. Seeing

these videos while I was grieving made me wonder whether this technology was comforting or

unsettling. That moment sparked my curiosity and led me to research the ethics and emotional effects

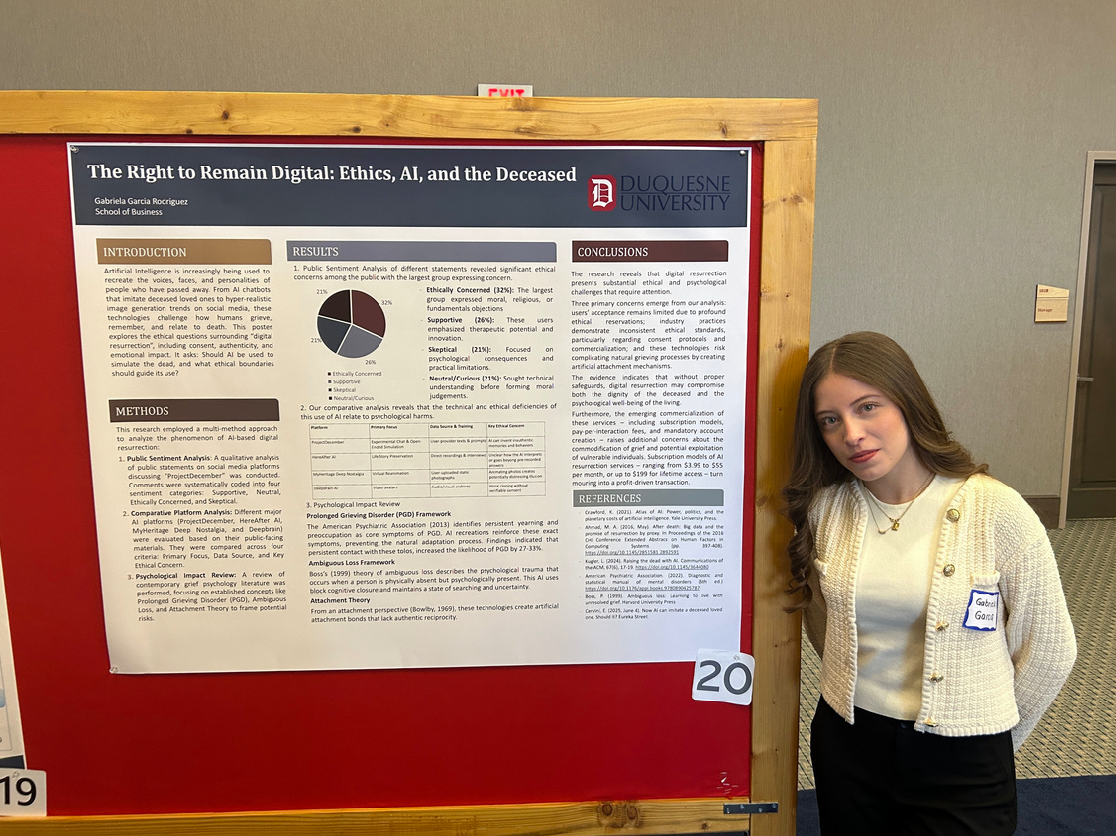

of AI resurrection, a project, The Right to Remain Digital: Ethics, AI and the Deceased, I later

presented at the Grefenstette Center's sixth annual Tech Ethics Symposium, at Duquesne University.

What I found is that entire companies now offer paid services to recreate someone after they die. Tools like Project December, HereAfter AI, and DeepBrain AI can simulate conversations or generate lifelike avatars based on photos, voice clips, or text histories. Some people online find these AI versions healing, but public reactions are split. In my analysis, 32 percent of users expressed ethical concerns, while others worried about authenticity or emotional risk. And those risks are real. Studies have shown that people who maintain digital interactions with a deceased loved one have higher rates of intrusive grief, reduced emotional closure, and healing that is as much as 27 to 33 percent slower.

Beyond the emotional concerns, this topic raises major ethical questions. Most privacy laws do not protect a person's data after death, meaning their voice or likeness could be used without their consent. This made me reflect on my own life too. I'm not sure I would want someone to digitally recreate me when I'm gone, because memories should remain genuine, shaped by real experiences rather than algorithms. As AI advances, we need to decide whether these tools truly honor those we've lost or whether they risk replacing authentic remembrance with artificial versions of the people we loved.